Understanding Large Language Models (LLMs)

Overview

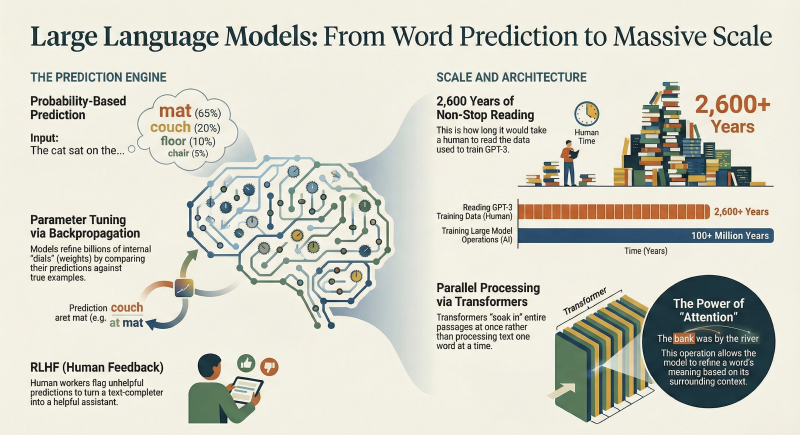

Large Language Models (LLMs) are sophisticated mathematical functions designed to predict the next word in a sequence of text by assigning probabilities to all possible outcomes. These models are built upon the Transformer architecture, which enables parallel processing of entire text blocks for enhanced efficiency.

Key Insights

- LLMs function as probabilistic next-word predictors, assigning likelihoods to all potential subsequent words.

- The Transformer architecture is foundational, allowing LLMs to process entire text segments concurrently rather than sequentially.

- The attention mechanism within Transformers refines word meanings based on their surrounding context.

- LLM behavior is dictated by hundreds of billions of parameters (weights).

- Training comprises pre-training on massive internet datasets via backpropagation, followed by Reinforcement Learning with Human Feedback (RLHF) to align models with human preferences.

- The scale of LLM training is immense, requiring specialized hardware like GPUs.

- Model behavior is an emergent phenomenon of billions of tuned values, making the exact rationale for specific predictions challenging to ascertain.

Technical Details

How LLMs Predict Text

LLMs operate by predicting the most probable next word in a sequence. When interacting with an LLM, such as a chatbot, the model continuously generates words based on the input text and its prior predictions. Unlike deterministic systems, LLMs assign a probability distribution to all possible next words, allowing them to select less likely words at random. This probabilistic selection introduces variability, meaning a given prompt can yield different outputs each time it runs, contributing to more natural-sounding responses.

The Transformer Architecture

Introduced in 2017, the Transformer architecture revolutionized language models by enabling parallel processing of text. This contrasts with older models that processed text word-by-word. Key components include:

- Word Embeddings: Each word is first associated with a continuous list of numbers, encoding its meaning. This numerical representation is crucial as training processes work exclusively with continuous values.

- Attention Mechanism: This unique operation allows these numerical representations to interact and refine their encoded meanings based on the surrounding contextual words. For instance, the representation for “bank” adjusts to reflect whether the context implies a financial institution or a riverbank. This refinement occurs in parallel across all words.

- Feed-Forward Neural Networks: These networks provide additional capacity for the model to store and leverage complex language patterns learned during training.

Data flows through multiple iterations of these operations, enriching the numerical representations until a final function processes the last vector to produce a probabilistic prediction for the next word.

Training LLMs: From Pre-training to Refinement

LLM training is a two-phase, computationally intensive process.

Pre-training

Pre-training involves exposing the model to an enormous volume of text data, typically trillions of examples sourced from the internet. This phase aims to enable the model to auto-complete random passages of text.

- Parameters: The “large” in LLM refers to the hundreds of billions of continuous values (parameters or weights) that entirely determine the model’s behavior. These parameters are initially random.

- Process:

- The model receives an example text with the last word omitted.

- It predicts the missing last word.

- The prediction is compared to the true last word.

- The backpropagation algorithm then tweaks all parameters, making the model more likely to choose the correct word and less likely to choose incorrect ones in future predictions.

- Scale: The computational scale of pre-training is immense. For example, training the largest LLMs can involve operations equivalent to over 100 million years of computation for a machine performing one billion operations per second. This necessitates GPUs, special computer chips optimized for parallel processing.

Reinforcement Learning with Human Feedback (RLHF)

While pre-training creates a powerful auto-completer, it doesn’t guarantee helpful or aligned assistant behavior. RLHF addresses this by:

- Human Correction: Human workers evaluate model outputs, flagging unhelpful, biased, or problematic predictions.

- Parameter Refinement: These human corrections serve as feedback, further refining the model’s parameters to align its predictions with user preferences and desired assistant qualities.

The Challenge of Interpretability

Despite the intricate design, the specific behavior of an LLM is an emergent phenomenon resulting from the tuning of billions of parameters during training. This makes it incredibly challenging for researchers to pinpoint the exact reasons why a model makes a particular prediction, even as the generated outputs demonstrate remarkable fluency and utility.